March 24, 2026

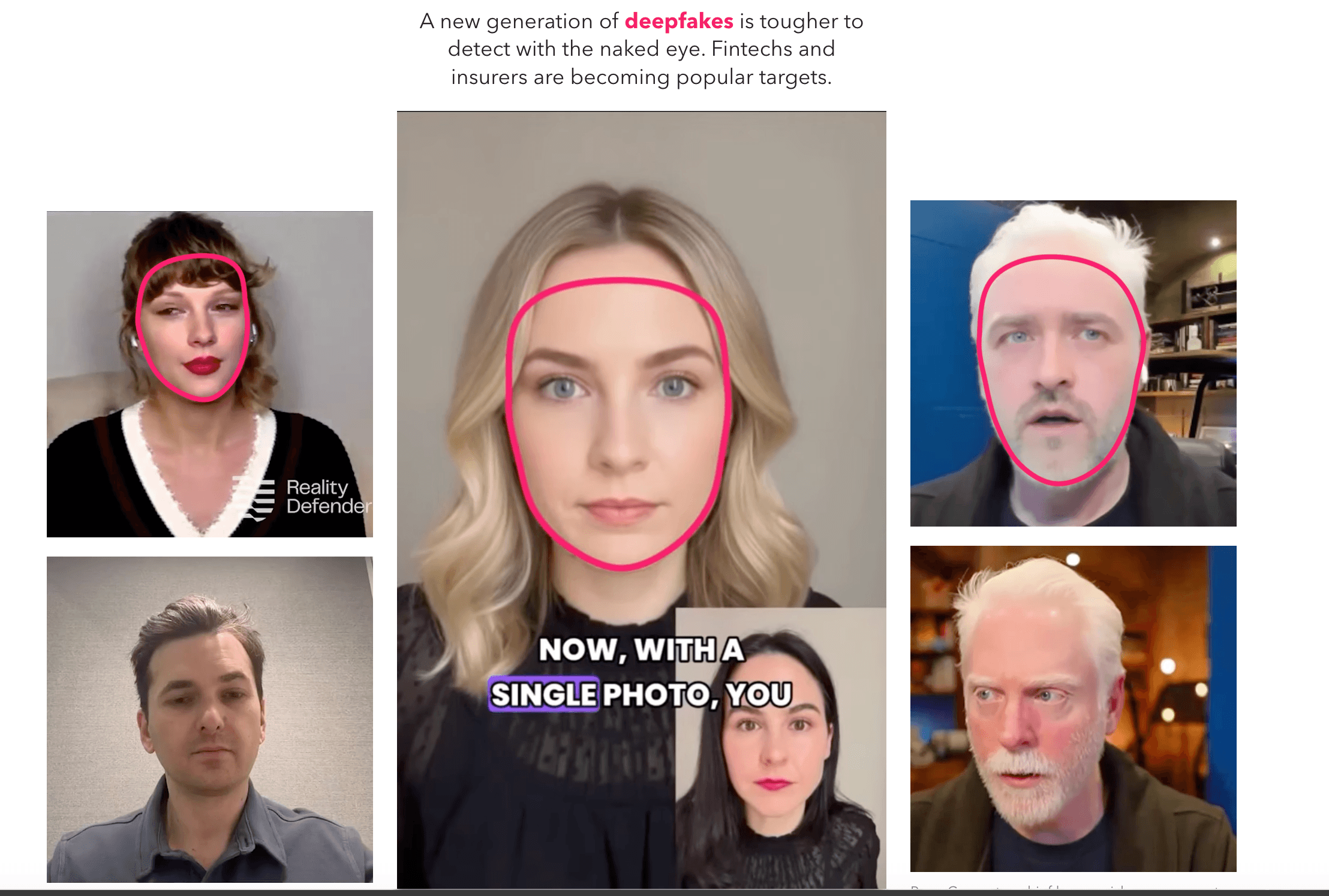

The landscape of corporate security has shifted dramatically as deepfake technology becomes more accessible and its output harder to distinguish from genuine footage. Recent tests conducted by cybersecurity firms like Reality Defender show that even industry professionals struggle to identify AI-generated media, with a majority failing to distinguish real images and videos from synthetic ones. As the cost of these tools drops, bad actors are moving away from media with obvious "glitches" like extra fingers or monotone voices, instead producing near-perfect video and audio that can fool even the most cautious employees.

Financial institutions and insurance companies have become primary targets for these sophisticated scams. Modern attackers often use "multi-layered" deception, such as hacking an executive’s messaging account to schedule a high-priority video call. During these calls, AI-generated avatars of CEOs or partners are used to create a false sense of urgency, successfully coaxing staff into authorizing million-dollar wire transfers. These incidents are reportedly occurring with increasing frequency, though many remain undisclosed due to the lack of mandatory reporting laws for such digital heists.

In response to this growing threat, companies are being forced to look beyond traditional digital security tools. Experts suggest that "human-layer" defenses are now just as critical as software patches. This involves implementing physical verification protocols, such as requiring an employee to call an executive back on a trusted, "out-of-band" phone line to verify any request for a financial transfer or a password reset. The goal is to move away from a culture of implicit digital trust toward one of active, physical confirmation.
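
The callback rule described above can be sketched as a simple policy check. This is a minimal illustration, not a procedure taken from the article: the `TRUSTED_DIRECTORY` table, the `Request` fields, the action names, and the example address and phone numbers are all hypothetical. The key assumption is that the callback number always comes from a directory maintained in advance, never from the request itself.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical directory of callback numbers, registered ahead of time
# through a separate process (an assumption for this sketch).
TRUSTED_DIRECTORY = {"ceo@example.com": "+1-555-0100"}

# Hypothetical labels for the request types the policy covers.
SENSITIVE_ACTIONS = {"wire_transfer", "password_reset"}


@dataclass
class Request:
    requester: str                          # claimed identity of the sender
    action: str                             # what is being asked for
    channel: str                            # where the request arrived, e.g. "video_call"
    contact_offered: Optional[str] = None   # number supplied in the request (ignored)


def callback_number(request: Request) -> Optional[str]:
    """Return the out-of-band number to call, taken only from the directory."""
    return TRUSTED_DIRECTORY.get(request.requester)


def may_proceed(request: Request, confirmed_via_callback: bool) -> bool:
    """Allow a sensitive action only after a confirmed out-of-band callback."""
    if request.action not in SENSITIVE_ACTIONS:
        return True
    if callback_number(request) is None:
        # No trusted number on file: the request cannot be verified.
        return False
    return confirmed_via_callback


if __name__ == "__main__":
    req = Request("ceo@example.com", "wire_transfer", "video_call", "+1-555-9999")
    print(callback_number(req))      # number from the directory, not from the request
    print(may_proceed(req, False))   # blocked until the callback confirms it
```

The design choice worth noting is that `contact_offered` is never consulted: a phone number provided inside a possibly compromised call or message is part of the same channel the attacker controls, so it cannot serve as independent confirmation.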

Ultimately, the danger of deepfakes lies not just in the immediate financial loss, but in the "fracturing of trust" within an organization. When employees can no longer be certain that the face on a Zoom screen or the voice on a voicemail belongs to the person it appears to be, the foundation of corporate communication begins to erode. Security leaders are now urging businesses to conduct simulated deepfake attacks on their own staff to build the psychological resilience needed to spot these high-tech ruses before they result in a catastrophic breach.
